Tradpute

Most of what we’re calling “AI” at the product layer is just traditional computing in a trenchcoat.

I’ve been using a word for this: tradpute. Traditional computing. CPUs, deterministic logic, milliseconds-per-request, pennies-per-thousand-queries. The substrate that’s been running the internet for decades — fast, cheap, auditable, and completely incapable of hallucination.

Here’s the part nobody says out loud: the highest-value use of AI right now might be generating more tradpute.

The dump truck problem

You ask an LLM to write a SQL query. It burns GPU time to produce text that then runs on your $0.001-per-query Postgres instance. You ask it to parse a date, format an address, answer a question that could have been a lookup table. These are tradpute tasks (cheap, deterministic, solved), except now they’re gated behind a $0.01-per-call inference API.

That’s the dump truck. A powerful machine, impressively large, totally capable of delivering your letter. But you’re burning 800 dollars of diesel and an operator’s day to move 50 grams of paper across town.

Using expensive compute because it’s easier than writing the tradpute yourself is not a sustainable architecture. It’s a convenience tax disguised as a technology choice.
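To put rough numbers on the convenience tax, here is a minimal sketch that uses only the two illustrative prices from the example above ($0.001 per query, $0.01 per call). Those are this essay's figures, not measured or quoted rates, and the function names are made up for illustration.

```python
from decimal import Decimal

# Illustrative per-request prices from the essay's own example.
# Assumptions for the sake of arithmetic, not real quotes.
TRADPUTE_COST_PER_REQUEST = Decimal("0.001")   # the "$0.001-per-query" figure
INFERENCE_COST_PER_REQUEST = Decimal("0.01")   # the "$0.01-per-call" figure


def total_cost(requests: int, cost_per_request: Decimal) -> Decimal:
    """Total spend for a number of requests at a flat per-request price."""
    return requests * cost_per_request


def convenience_tax(requests: int) -> Decimal:
    """Extra spend from routing every request through inference
    instead of through plain deterministic code."""
    return (total_cost(requests, INFERENCE_COST_PER_REQUEST)
            - total_cost(requests, TRADPUTE_COST_PER_REQUEST))


if __name__ == "__main__":
    for n in (1_000, 1_000_000, 1_000_000_000):
        print(f"{n:>13,} requests: extra ${convenience_tax(n):,.2f}")
```

Under those assumed prices the gap is tenfold per request, which works out to $9,000 of extra spend per million requests. Swap in your own numbers; the shape of the curve is the point.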

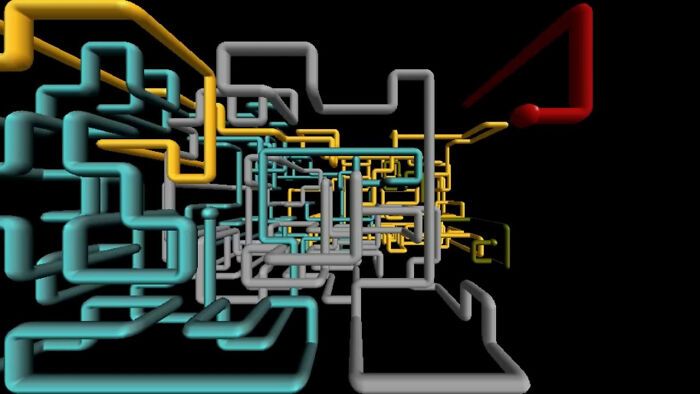

Think about the Windows 95 pipes screensaver. Pipes extending, connecting, filling the screen with purposeful plumbing — no uncertainty, no reasoning, just systems doing exactly what they were built to do, at near-zero marginal cost. That’s tradpute. That’s what’s sitting on the other end of every AI call that didn’t need to be one.

The pipes don’t think. That’s the point. Thinking is expensive. Pipes are cheap.

The subsidy that won’t last

Consumer inference pricing today is heavily subsidized. The hyperscalers and labs are racing for adoption, not margin. You are not paying the real cost of the compute. Eventually, you will.

When that normalization happens, a lot of “AI-powered” products will discover that their utility — real as it is — doesn’t justify the inference cost at market rates. That’s the bubble. Not a hype bubble about what AI can do. A cost-structure bubble about what it costs to run.

The products that survive that reckoning will be the ones where AI touched the critical path as little as possible. Where AI generated the code, the config, the logic — and then got out of the way so tradpute could run it a billion times for almost nothing.

What AI is actually for

The correct use of AI is to lower the barrier to creating tradpute.

Write the query once. Run it forever. Generate the parser, the formatter, the cron job, the IaC template — with AI assist, in a tenth of the time. Then ship tradpute. The AI ran once. The tradpute runs at scale, cheaply, reliably, without a GPU in sight.
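As a sketch of what "the AI ran once" can look like, here is the kind of small date parser you might have a model draft and then review, test, and ship as ordinary code. The function name `parse_date` and the list of accepted formats are hypothetical choices for this example; nothing in it calls a model at runtime.

```python
from datetime import date, datetime

# Hypothetical set of input formats this service accepts.
# Tried in order; the first one that matches wins.
ACCEPTED_FORMATS = (
    "%Y-%m-%d",   # 2024-03-05
    "%d/%m/%Y",   # 05/03/2024 (day first)
    "%Y%m%d",     # 20240305
)


def parse_date(text: str) -> date:
    """Parse a date string deterministically, or raise ValueError."""
    cleaned = text.strip()
    for fmt in ACCEPTED_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date()
        except ValueError:
            continue  # not this format, try the next one
    raise ValueError(f"unrecognized date format: {text!r}")


if __name__ == "__main__":
    print(parse_date("2024-03-05"))
    print(parse_date(" 05/03/2024 "))
```

Once this is merged it behaves the same way on the first call and the billionth, and when it fails it fails loudly with a `ValueError` instead of a plausible-looking guess.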

This is where the gains compound: AI as a force multiplier on traditional computing, not a replacement for it.

The framing matters because “AI-powered” has become an architecture decision masquerading as a marketing line. Is AI in your critical path at runtime? Or did AI help you build something that no longer needs it to run?

The former is expensive and fragile. The latter is leverage.

Why tradpute needs a name

“Traditional computing” sounds defensive. Like you’re apologizing for not being modern. So: tradpute. A first-class noun for a first-class category.

Tradpute is not legacy. It’s not dumb. It’s the 99% of compute that happens after the AI has left the room — and it’s what you want your system doing most of the time, because it’s fast, it’s cheap, and it does exactly what you told it to.

AI is a tool for creating and enhancing tradpute. When AI becomes the tradpute — when it’s in the hot path of every request, doing things a lookup table could do — you’ve got a dump truck problem.
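One way to keep the model out of the hot path, sketched under some assumptions: answer from a lookup table first, and only reach for the expensive call on a miss, caching the result so the same question stays cheap next time. `make_resolver` and `expensive_fallback` are hypothetical names; the fallback is just any callable you pass in, and this is one possible pattern, not the only one.

```python
from typing import Callable, Dict


def make_resolver(
    table: Dict[str, str],
    expensive_fallback: Callable[[str], str],
) -> Callable[[str], str]:
    """Build a resolver that prefers the lookup table (tradpute) and
    calls the expensive fallback only for keys it has never seen.

    Assumes the table's keys are already lowercase. The table is
    mutated in place so that fallback answers are cached.
    """

    def resolve(key: str) -> str:
        normalized = key.strip().lower()
        if normalized in table:
            return table[normalized]            # cheap path: dictionary lookup
        answer = expensive_fallback(normalized)  # expensive path: runs once per new key
        table[normalized] = answer               # later requests stay on the cheap path
        return answer

    return resolve


if __name__ == "__main__":
    fallback_calls = []

    def fake_model(key: str) -> str:
        # Stand-in for an inference call; records how often it is hit.
        fallback_calls.append(key)
        return "unknown"

    resolve = make_resolver({"ca": "California", "ny": "New York"}, fake_model)
    print(resolve("CA"), resolve("zz"), resolve("ZZ"))
    print("fallback calls:", len(fallback_calls))
```

In this toy run three requests produce a single fallback call. Whether cached model answers are trustworthy enough to serve forever is a separate judgment call that depends on the task.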

The pipes don’t need to think. Let them flow.